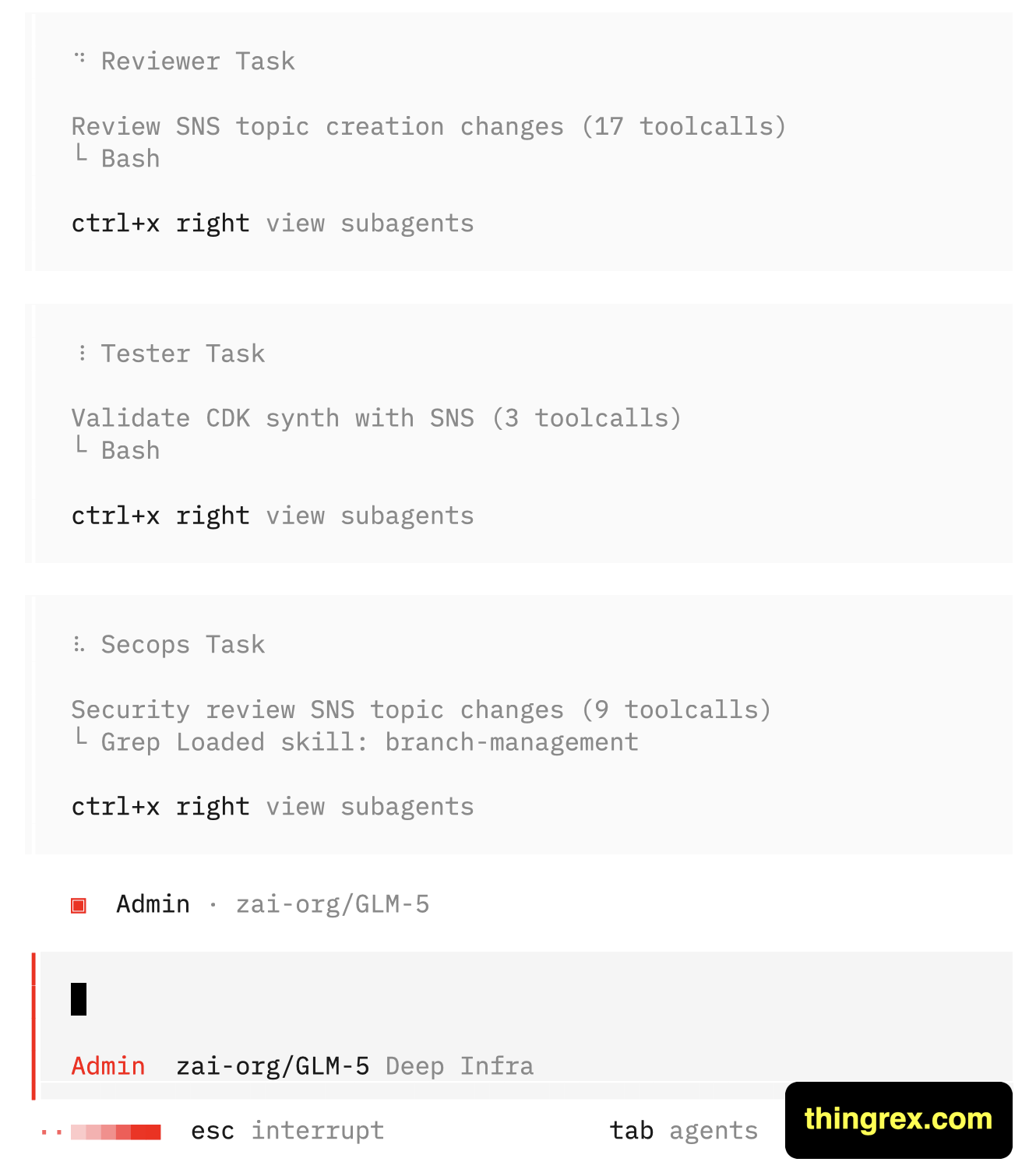

Today, one of my AWS infrastructure management agents went down the wrong path. I noticed and was about to jump in and terminate the run, but before I could smash the 'Esc' key, the Reviewer Agent caught the mistake and course-corrected his AI colleague.

The fix happened automatically: my team of agents self-corrected without any intervention from me.

The root cause? My initial prompt wasn’t precise enough. I was in a hurry, and I assumed they’d read between the lines and fill in the details I neglected to describe.

I've seen the same scenario play out with business requirements, far more often than I'd like.

Your team will “generate some results,” but if the requirements aren’t precisely defined, those results may be completely off-target.

The business owner thinks: “They’ll figure it out.”

The team thinks: “We delivered what was asked, to the best of our understanding and capabilities.”

Result: wasted effort, misaligned expectations, and disappointment all around.

The fix is simple but not easy:

- Design your teams (including AI teams) to self-correct.

- Build review steps into the workflow.

- Make it safe to push back and ask clarifying questions.

- Catch misalignment early, not after delivery.

That’s exactly what I help business owners do: distill vague ideas into clear, actionable, measurable requirements, then empower their teams to deliver.

👉 If you could use that, drop me a message.